AI Washing Becomes the New Greenwashing: The $1.5 Billion Collapse of Builder.ai

As artificial intelligence becomes increasingly integrated into corporate operations and investment strategies, the collapse of Builder.ai offers a cautionary lesson for investors and business leaders alike.

In the frothy years of artificial-intelligence exuberance, investors poured billions into companies promising to automate the future. One of the most celebrated was Builder.ai, a London-based startup that claimed its AI platform could build custom software applications with minimal human intervention.

By 2025, the company carried a reported valuation of $1.5 billion and had positioned itself as a pioneer of “no-code” AI-driven development. This week, it entered liquidation.

The trigger was a reported $37 million seizure by creditors. The underlying causes, according to emerging disclosures and creditor filings, include allegedly inflated revenue figures, mounting debt, and a widening gap between the company’s marketing narrative and operational reality. Far from being powered primarily by autonomous AI systems, Builder.ai was reportedly relying on hundreds of human engineers to perform much of the work it described as automated.

The episode may prove to be more than a startup flameout. For institutional investors, it raises a larger question: Is “AI washing” becoming the new greenwashing?

Know Your AI Providers

Builder.ai marketed itself as an AI-native platform capable of automating the software development lifecycle. Promotional materials suggested that customers could assemble sophisticated applications as easily as configuring products in an online store—an industrialization of coding through artificial intelligence.

The pitch resonated in a market hungry for productivity gains. Corporate clients sought cost efficiencies; venture investors sought exposure to scalable AI infrastructure; private markets rewarded companies that could plausibly claim technological defensibility.

Valuations reflected that optimism. Like many AI-adjacent startups, Builder.ai benefited from a capital environment in which growth narratives often outpaced technical scrutiny.

But the core value proposition—automation replacing manual labor at scale—appears to have been overstated. According to individuals familiar with the company’s operations, significant portions of the development work were executed manually by human engineers, with AI playing a more limited role than advertised.

The difference is not semantic. In the AI economy, the distinction between tool-assisted productivity and genuine automation is material to margins, scalability, and long-term valuation.

Revenue, Debt and Disclosure

Compounding the operational concerns were allegations of inflated revenue reporting. Creditors have cited discrepancies between projected growth and realized cash flows. The company also carried substantial debt obligations, which became harder to service as liquidity pressures mounted.

The reported $37 million seizure by creditors appears to have accelerated the collapse. Once key assets were frozen, the company’s ability to continue operating deteriorated rapidly, culminating in liquidation proceedings.

For investors, the more consequential issue is governance. If revenue figures were materially inflated, questions will inevitably follow:

- What controls were in place around financial reporting?

- How rigorous was board oversight?

- What due diligence did investors conduct into the technical claims underpinning the business model?

The answers will matter not only for Builder.ai’s stakeholders, but for the broader AI investment landscape.

AI Washing: A Familiar Pattern

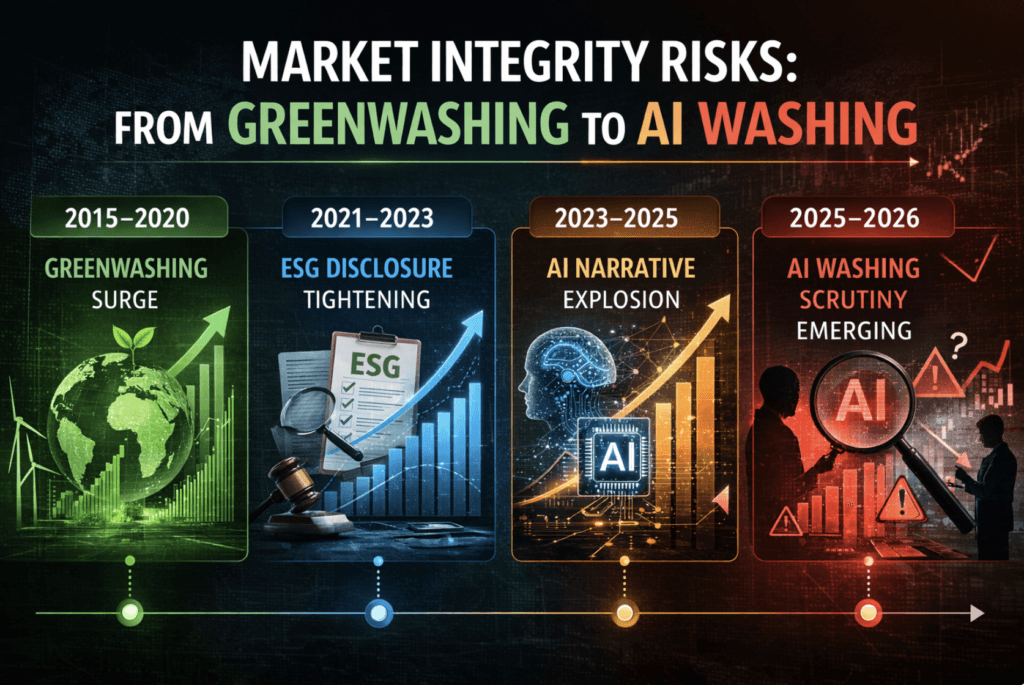

The ESG movement of the past decade was marked by a wave of “greenwashing”—companies overstating sustainability credentials to capture capital inflows. Regulators eventually responded with tighter disclosure requirements, assurance standards, and enforcement actions.

The Builder.ai case suggests that the AI sector may be entering a similar phase.

AI washing typically takes one of three forms:

- Exaggerating the autonomy or intelligence of systems that rely heavily on human input.

- Labeling traditional software tools as AI to attract valuation premiums.

- Projecting scalability assumptions that depend on automation not yet achieved.

In an environment where AI multiples often exceed those of conventional software firms, the incentive to blur distinctions can be powerful.

Yet for institutional investors, the risks are concrete. Overstated automation translates into compressed margins. Inflated revenue narratives distort valuation models. Weak governance erodes exit pathways.

Governance as a Valuation Driver

The collapse underscores a broader shift: AI governance is becoming a core financial variable. Institutional allocators are increasingly examining:

- Board-level AI literacy

- Technical auditability of AI systems

- Transparency around human-in-the-loop processes

- Clear revenue recognition policies tied to actual automation metrics

In private markets, due diligence is evolving to include independent technical validation of AI claims. In public markets, analysts are beginning to scrutinize whether “AI-enabled” revenue reflects genuine algorithmic output or conventional labor rebranded with modern terminology.

For creditors, the lesson is equally sharp. Debt structures built on high-growth automation narratives may prove fragile if those assumptions fail.

A Market Inflection Point

Builder.ai’s liquidation does not signal a collapse of the AI sector. On the contrary, enterprise adoption of machine learning and automation continues to expand. But it may mark the end of a phase in which narrative alone could sustain billion-dollar valuations.

As regulators in the U.K., the EU and the U.S. sharpen oversight of AI deployment—particularly around transparency and accountability—disclosure standards are likely to tighten. Investors may demand clearer distinctions between AI-assisted services and AI-driven infrastructure.

The governance pillar of ESG—often overshadowed by environmental metrics—could become central to AI-era capital allocation.

For institutional investors, the takeaway is not to retreat from AI exposure. It is to interrogate it.

- What proportion of revenue is truly automated?

- What technical audits validate AI performance claims?

- What controls prevent revenue inflation?

- How resilient is the capital structure under stress?

In the wake of Builder.ai’s fall, those questions may become standard practice.

If greenwashing defined the last decade’s reputational risk cycle, AI washing may define the next governance test for capital markets.


Matt Bird is the Founder, CEO, and Editor-in-Chief of ESG News. He brings 25 years of experience in corporate strategy, media, fintech, and communications, including 15 years specializing in news and journalism. Matt was recognized by the United Nations as #3 of the “Top 10 Most Influential Media Executives for Impact” in 2015 during the launch of the UN SDGs.

He has advised the Sustainable Stock Exchange initiative (SSEI), UNCTAD, and the UN, and hosts event coverage at the World Economic Forum, ADFW, Climate Week NYC, EU Parliament, COP, the Vatican, NASDAQ, NYSE, and more. Matt is a founding board member of the Humanity 2.0 Foundation, a Vatican-based NGO focused on identifying and removing impediments to human flourishing.

He previously rang the NASDAQ Closing Bell in honor of his partnership with NASDAQ OMX to launch the world’s first retail investor targeting and newswire monitoring platform with the NASDAQ Financial Services Group. Matt launched ESG News in 2021, leading coverage of more than 10,000 news stories as of 2026—and truly loves what he does.